This will take the CSS selector as a parameter and return an instance of Elements, which is an extension of the type ArrayList.

If multiple elements need to be selected, you can use the select() method. In this example, selectFirst() method was used. The pom.xml file would look something like this:Įlement firstHeading = document. In the pom.xml (Project Object Model) file, add a new section for dependencies and add a dependency for JSoup. If you do not want to use Maven, head over to this page to find alternate downloads. Use any Java IDE, and create a Maven project. The first step of web scraping with Java is to get the Java libraries. Let’s examine this library to create a Java website scraper.īroadly, there are three steps involved in web scraping using Java. JSoup is perhaps the most commonly used Java library for web scraping with Java. Now let’s review the libraries that can be used for web scraping with Java. Selects any element with class “new”, which are inside

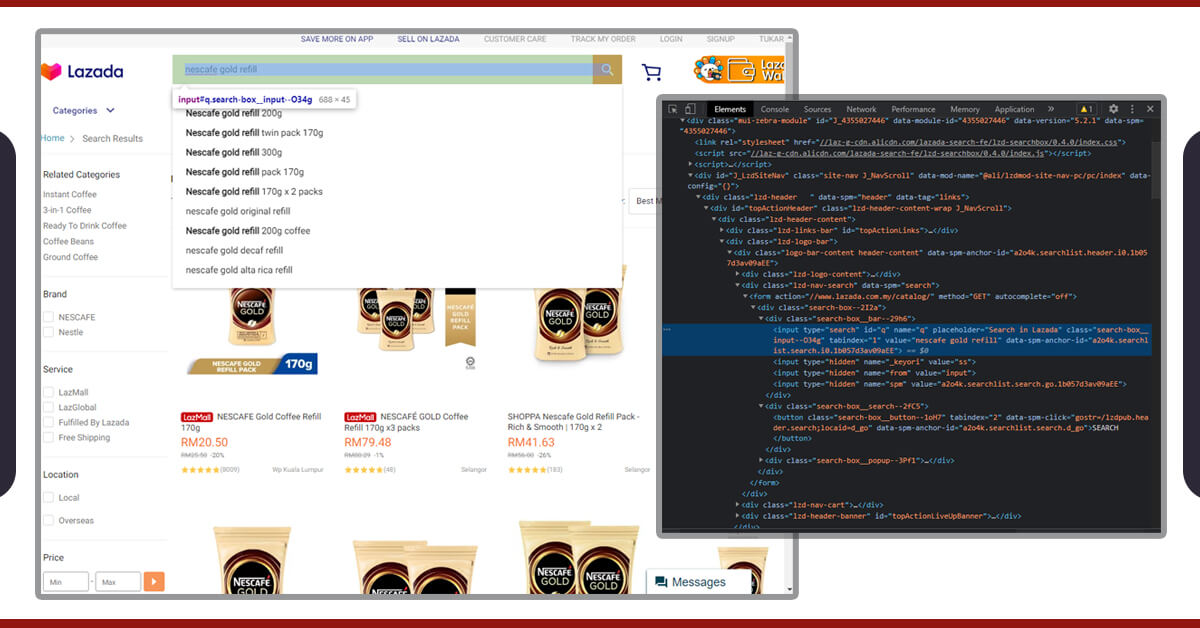

P.link.new – Note that there is no space here. blue – selects any element where class contains “blue”ĭiv#firstname – select div elements where id equals “firstname” #firstname – selects any element where id equals “firstname” Quick overview of CSS Selectorsīefore we proceed with this Java web scraping tutorial, it will be a good idea to review the CSS selectors: Note that not all the libraries support XPath. Good knowledge of HTML and selecting elements in it, either by using XPath or CSS selectors, would also be required. For managing packages, we will be using Maven.Īpart from Java basics, a primary understanding of how websites work is also expected. This tutorial on web scraping with Java assumes that you are familiar with the Java programming language. Prerequisite for building a web scraper with Java In the later sections, we will examine both libraries and create web scrapers. It is helpful in web scraping as JavaScript and CSS are not required most of the time. The good thing is that with just one line, the JavaScript and CSS can be turned off. HtmlUnit can also be used for web scraping. It is a way to simulate a browser for testing purposes. As the name of this library suggests, it is commonly used for unit testing. It can emulate the key aspects of a browser, such as getting specific elements from the page, clicking those elements, etc. HtmlUnit is a GUI-less, or headless, browser for Java Programs. The name of this library comes from the phrase “tag soup”, which refers to the malformed HTML document.

JSoup is a powerful library that can handle malformed HTML effectively. There are two most commonly used libraries for web scraping with Java- JSoup and HtmlUnit. In this article, we will focus on web scraping with Java and create a web scraper using Java. The problem is deciding which language is the best since every language has its strengths and weaknesses. Some of the popular languages used for web scraping are Python, JavaScript with Node.js, PHP, Java, C#, etc. Determining the best programming language for web scraping may feel daunting as there are many options.
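To make the difference between selectFirst() and select() concrete, here is a minimal sketch that runs the CSS selectors from this article against a small inline page. The class name SelectorDemo, the helper methods, and the sample HTML are hypothetical and exist only for illustration; the sketch assumes the JSoup library is on the classpath.

```java
import org.jsoup.Jsoup;
import org.jsoup.nodes.Document;
import org.jsoup.nodes.Element;
import org.jsoup.select.Elements;

import java.util.ArrayList;
import java.util.List;

public class SelectorDemo {

    // Hypothetical sample page, inlined so the sketch runs without network access.
    static final String SAMPLE_HTML =
            "<html><body>"
          + "<h1 id=\"firstname\" class=\"blue\">Sample heading</h1>"
          + "<p class=\"link new\">first</p>"
          + "<p class=\"link\">second <span class=\"new\">nested</span></p>"
          + "</body></html>";

    // selectFirst() returns the first matching Element, or null if nothing matches.
    static String firstText(Document document, String cssSelector) {
        Element first = document.selectFirst(cssSelector);
        return first == null ? null : first.text();
    }

    // select() returns Elements, a list holding every matching Element.
    static List<String> allTexts(Document document, String cssSelector) {
        Elements matches = document.select(cssSelector);
        List<String> texts = new ArrayList<>();
        for (Element match : matches) {
            texts.add(match.text());
        }
        return texts;
    }

    public static void main(String[] args) {
        Document document = Jsoup.parse(SAMPLE_HTML);
        System.out.println(firstText(document, "#firstname"));  // id selector
        System.out.println(allTexts(document, "p.link"));       // every p with class "link"
        System.out.println(allTexts(document, "p.link.new"));   // p with both classes
        System.out.println(allTexts(document, "p.link .new"));  // class "new" inside p.link
    }
}
```

Because selectFirst() can return null, the helper checks for that case before reading the text. When scraping a live site, Jsoup.connect(url).get() would typically replace Jsoup.parse() as the way to obtain the Document.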

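The article describes JSoup as able to handle malformed HTML, or “tag soup”. The following is a small sketch of what that looks like in practice, assuming JSoup is on the classpath. The class name TagSoupDemo and the broken input string are made up for this illustration.

```java
import org.jsoup.Jsoup;
import org.jsoup.nodes.Document;

public class TagSoupDemo {

    // Hypothetical malformed input: no <html> or <body>, and no closing tags at all.
    static final String TAG_SOUP = "<div><p>One<p>Two";

    // Jsoup.parse() builds a complete document tree even from incomplete markup.
    static Document parse(String html) {
        return Jsoup.parse(html);
    }

    public static void main(String[] args) {
        Document document = parse(TAG_SOUP);
        // Number of paragraphs recovered from the unclosed markup.
        System.out.println(document.select("p").size());
        // The repaired markup, with the missing closing tags filled in.
        System.out.println(document.body().html());
    }
}
```

The parser follows the same recovery rules a browser would, so the second unclosed paragraph ends the first one and both remain inside the div. This is why the selectors shown in this article usually still work on pages whose markup is not strictly valid.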